On the other hand, work which has very good theoretical ground or motivation, however, lacks the maturity and vast exploration to twist it to beat the current ones, I think are very relevant.Īn example: The SELU paper. This kind of more empirical results I do agree it is ok to just publish the one that does work or at least has indications of whether they work. The first point you make is quite more nuanced in a sense as it really depends on what exactly is the concrete subject.įor instance, we can not just publish every random change to an architecture that someone wakes up in the night with. Unless I've seen cases where the authors of certain papers published in more general than ML journals to start talking about how this is like human brain thinking and all that nonsense which is where I draw the line and call that intentional detrimental PR. So yes, maybe the papers are overhyped, but that is not the always the authors fault, but often the community as well. Other times, you will try a lot of things to follow up and none of the results out of it will be interesting or compelling to deserve a publication.Įspecially these two labs will never publish some work like this as it won't meet their standards of quality. Thirdly, if you have ever done the research you will now - about 80% of it fails.Īnd it does not nessacarily means "fail" in the usual sense, but sometimes there are interesting observations, for which no follow up will work or you will not find anything more to do with them. The progress in AI is going to be slow, nevertheless faster than it was before thanks to tools like Theano, Tensorflow, Pytorch and all the others. Just put back any of the very famous vision models 10 years back and training times will grow from weeks to about a year. Most of the progress in AI so far has been largely due to hardware improvements. 
Second, all that BS that people read about how fast we are going to get to AI, the exponential improvement in ML blabla, is total nonsense.

I think it was a very interesting and subtle observation which fundamentally is hard to explain why such neuron exists when the network is not trained to have it? Was this pure luck or is there something more fundamental in play? If so what it is, why it is? The unsupervised neuron for instance, is not something that will change the world.

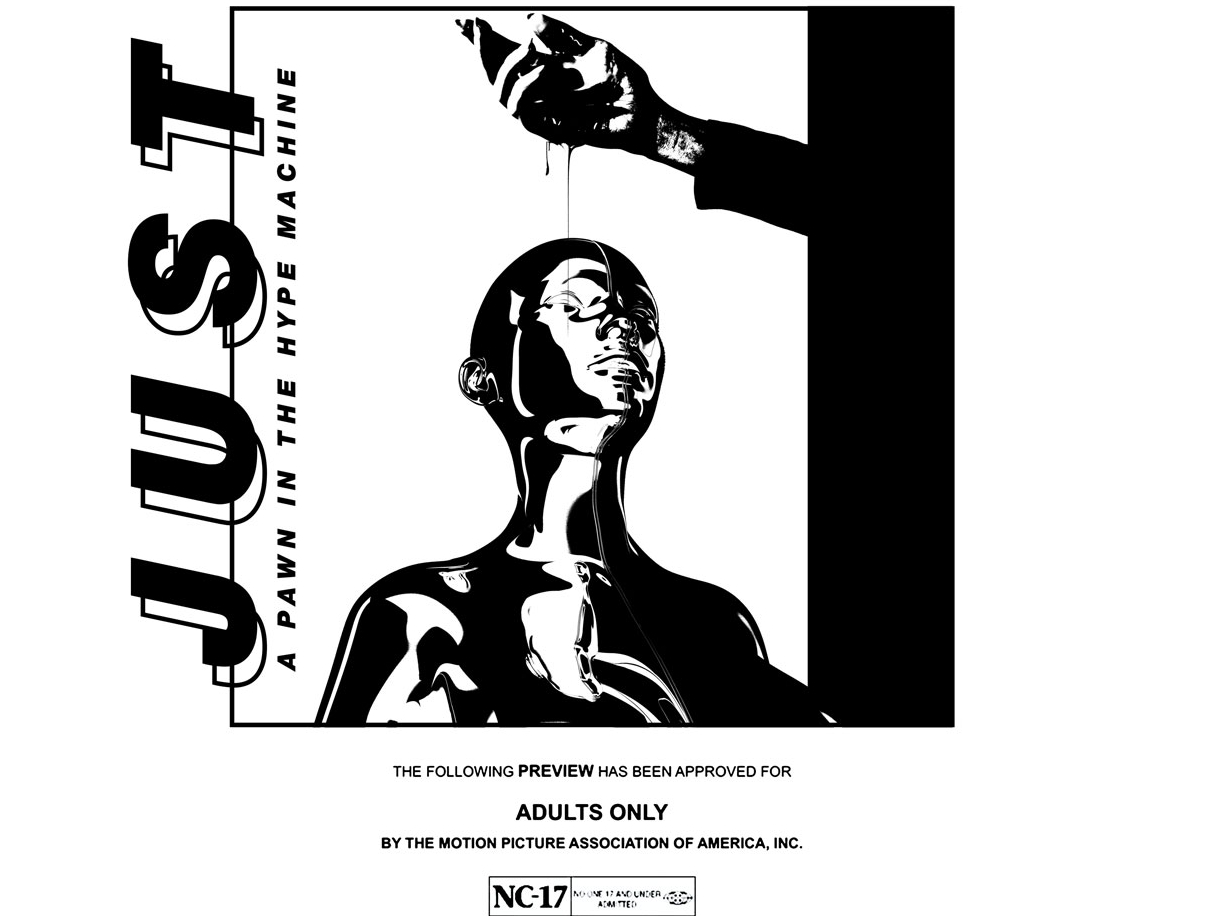

Well you know when you are researching something new you can not beat something that has been grinded over for decades from your first work. In fact, this is exactly what is wrong with the whole community at the moment!Įveryone if he does not see SOTA or some amazing results just cries out load why do we care. It seems though that the author here has never, or barely, done significant research in general, to understand several facts about research.įirst, be damn with your stupid desires for immediate grandiose results, SOTA results are just requesting that every "good" paper has like significant impact. However, most of these papers, at their core, are pretty strong and solid work. Note that this is not necessarily their fault, but notably, researchers in academia don't have so good PR, as well there is a significant fault in the media and the non-academic community, which can latch on these things like flies on. So, I do agree that some of the big labs papers get slightly over-hyped after some PR compared to other work. Metacademy is a great resource which compiles lesson plans on popular machine learning topics.įor Beginner questions please try /r/LearnMachineLearning, /r/MLQuestions or įor career related questions, visit /r/cscareerquestions/ Please have a look at our FAQ and Link-Collection Rules For Posts + Research + Discussion + Project + News on Twitter Chat with us on Slack Beginners:
